Retract Until Verified

The Implausibly Large Effect in the Recent Time-of-Day Immunotherapy Trial

A new Nature Medicine phase 3 trial claims that simply giving first-line anti–PD-1 immunotherapy before 3 pm instead of after nearly doubles survival for advanced lung cancer (specifically, non–small cell lung cancer or NSCLC for short). That’s not a small effect. It’s the same magnitude of benefit we see when we introduce immunotherapy at all in landmark NSCLC trials like KEYNOTE-189.

When an effect that large emerges from something as mundane as clock time, the responsible posture is verification. Extraordinary effects demand an extraordinary level of evidence. But when that extraordinary effect is paired with multiple red flags, the Time-of-Day Immunotherapy trial raises not just skepticism but the possibility that the findings are unreliable or, worse, fabricated.

This study should be retracted until its findings have been independently audited and verified.

The Effect Size Strains Credibility

The Nature Medicine immunotherapy trial reports hazard ratios for progression-free and overall survival of roughly 0.40 for patients receiving infusions before 3 pm compared with those treated later in the day. Median overall survival differs by more than eleven months.

Those hazard ratios live in the same neighborhood as the landmark trials that introduced immunotherapy into first-line treatment for metastatic NSCLC. In KEYNOTE-189, adding immunotherapy (pembrolizumab) to chemotherapy fundamentally altered survival curves. That was the effect of a drug.

Here, the claim is that timing alone produces a comparable magnitude of benefit.

Circadian biology is real. Immune function varies by time of day. But when a scheduling variable appears to generate a survival benefit on par with drug innovation, the prior probability that bias, artifact, imbalance, analytic flexibility, or, yes, potentially more serious data integrity problems explain the result is high.

Independent scrutiny, particularly by the Twitter/X account @houndcl, has surfaced multiple red flags that warrant careful examination. These concerns extend beyond interpretation and into the credibility of the data itself.

🚩 Zero Treatment Discontinuation Due to Adverse Events

The trial reported no adverse events leading to treatment discontinuation.

That is not a small discrepancy. It defies our expectations of immunotherapy across modern phase 3 trials.

In KEYNOTE-189 and KEYNOTE-407, roughly 14% of patients receiving chemo-immunotherapy discontinued treatment because of toxicity. In IMpower150, the discontinuation rate was 25%. Dual checkpoint blockade regimens such as CheckMate-227 report discontinuation rates closer to 18%. Across different PD-1/PD-L1 combinations, chemotherapy backbones, and global trial networks, double-digit toxicity-driven discontinuation is the norm.

Against that backdrop, observing zero treatment discontinuations due to adverse events in 210 advanced NSCLC patients would be an extreme statistical outlier, to put it lightly.

Conservatively assuming that the underlying discontinuation rate was 13.8%, as seen in KEYNOTE-189, and that patients discontinue independently of one another, the probability of observing zero events in 210 patients is about one in 35 trillion [(1 − 0.138)²¹⁰ ≈ 2.9 × 10⁻¹⁴].

Even if you assume this trial’s population was uniquely fit or adherent to therapy, and cut the expected discontinuation rate in half to 6.9%, the probability of seeing zero events in 210 patients remains about one in three million [(1 − 0.069)²¹⁰ ≈ 3.0 × 10⁻⁷], statistically closer to impossibility than to a conceivable finding.
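The arithmetic behind these probabilities is a binomial calculation with zero events, and it is easy to reproduce. The Python sketch below assumes every patient discontinues independently with the same probability, which is a simplification; the function names `prob_zero_events` and `max_rate_compatible_with_zero` are introduced here for illustration only.

```python
def prob_zero_events(rate: float, n: int) -> float:
    """Probability of zero events among n independent patients who each
    have the same per-patient event probability `rate` (binomial, k = 0)."""
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be between 0 and 1")
    return (1.0 - rate) ** n


def max_rate_compatible_with_zero(n: int, alpha: float = 0.05) -> float:
    """Largest underlying rate for which seeing zero events in n patients
    still has probability of at least `alpha` (exact one-sided bound)."""
    return 1.0 - alpha ** (1.0 / n)


n_patients = 210  # trial size stated in the post

# 13.8% is the KEYNOTE-189 figure cited in the post; 6.9% is half of it.
for label, rate in [("KEYNOTE-189 rate", 0.138), ("half that rate", 0.069)]:
    p = prob_zero_events(rate, n_patients)
    print(f"{label} ({rate:.1%}): P(zero) = {p:.2e}, about 1 in {1 / p:,.0f}")

bound = max_rate_compatible_with_zero(n_patients)
print(f"Rate at which zero of {n_patients} has a 5% chance: {bound:.2%}")
```

Under these assumptions the first probability is on the order of 10⁻¹⁴ and the second on the order of 10⁻⁷. The last line turns the question around: the true discontinuation rate would have to be below roughly 1.4% for zero events in 210 patients to have even a 5% chance of occurring.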

🚩 Zero Censoring in the First Twelve Months

A second concern is the absence of censoring during the first year of follow-up.

Trials are inherently messy. Patients transfer care. They miss scans. They withdraw consent. Sites close. Administrative data cutoffs occur. Even in tightly run randomized trials, early censoring is common.

To observe no censoring across an entire year in advanced NSCLC stretches plausibility. Survival datasets are not this clean. The absence of expected noise is not reassuring. It is anomalous.

When hazard ratios are dramatic, anomalous follow-up is concerning.

🚩 Registry and Protocol Discrepancies

Public review of the cited ClinicalTrials.gov record reveals meaningful and unusual changes in how the study was described over time, including consequential changes to the study design itself.

On March 19, 2024, the registration was modified from an interventional randomized trial to an observational case-control study. Eleven days later, it was changed back to an interventional trial. Elsewhere, the described intervention shifted from immunotherapy to immunochemotherapy, and critical elements, including the definition of exposure timing and the primary outcomes, were revised.

Separately, the uploaded version of the Statistical Analysis Plan, dated January 2022, cited multiple publications from 2023 and 2024. That discrepancy does not invalidate the trial, but it does suggest either post hoc document assembly or inaccurate record keeping.

Registry updates are not inherently problematic. Trials evolve. Clerical errors occur. But pre-registration is not bureaucratic theater. Major changes in study design, exposure definitions, and primary endpoints, particularly when occurring in combination, weaken confidence that the analytic plan was prespecified and locked before data were known.

When the reported treatment effect is this large, methodological stability is not optional. It is essential. If the design evolved in material ways, those changes must be transparent and reconciled with the reported findings.

🚩 Infusion Timing Inconsistent with Workflow or Random Assignment

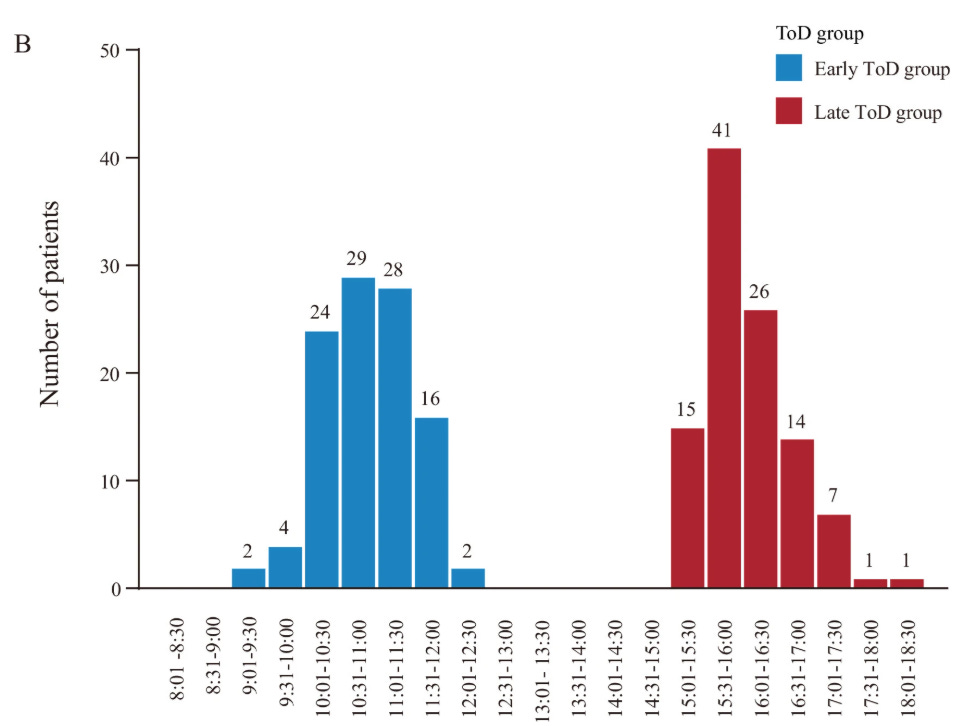

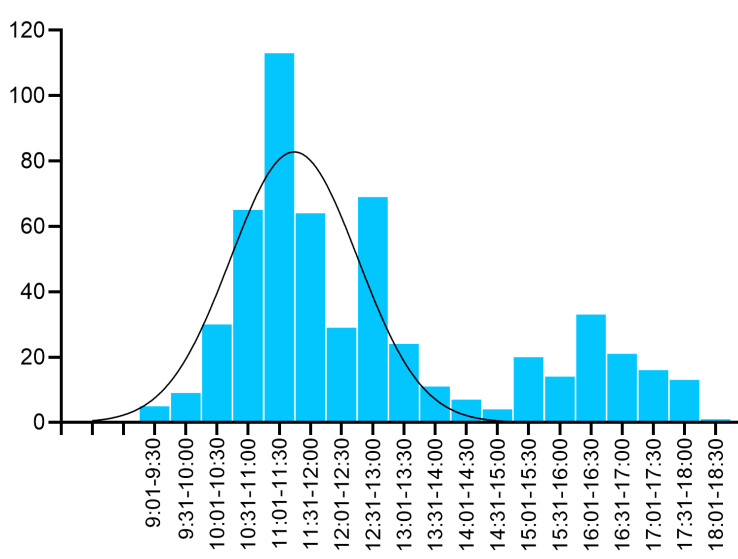

The trial also did not show the expected distribution of infusion times across clinic hours. Instead, infusion times cluster heavily between 9 am and 12 pm, then resume between approximately 3 and 6 pm, with a striking three-hour gap in between. There are almost no infusions between 12 and 3 pm.

That pattern is not what one expects from routine infusion workflow. In most oncology centers, infusion times are spread more evenly across operating hours. If the exposure cutoff is 3 pm, one would expect infusions on both sides of the boundary, including patients at 2:50, 2:55, 3:05 pm, and so forth. Instead, the figure depicts two structurally distinct clinic blocks separated by a nearly three-hour void.
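If exact infusion timestamps were released, the boundary question could be checked directly by counting infusions in a short window on either side of the cutoff. The sketch below is one hypothetical way to do that in Python; the function `count_near_cutoff`, the 30-minute window, and the example timestamps are all assumptions made for illustration, and none of the values are trial data.

```python
from datetime import time


def minutes_since_midnight(t: time) -> int:
    """Convert a clock time to minutes after midnight."""
    return t.hour * 60 + t.minute


def count_near_cutoff(infusion_times, cutoff=time(15, 0), window_minutes=30):
    """Return (before, after): the number of infusions that start within
    `window_minutes` before the cutoff and within `window_minutes` after it."""
    c = minutes_since_midnight(cutoff)
    before = after = 0
    for t in infusion_times:
        m = minutes_since_midnight(t)
        if c - window_minutes <= m < c:
            before += 1
        elif c <= m < c + window_minutes:
            after += 1
    return before, after


# Made-up timestamps purely to exercise the function; NOT trial data.
example_times = [
    time(9, 15), time(10, 40), time(11, 55),
    time(14, 50), time(15, 5), time(16, 30),
]
print(count_near_cutoff(example_times))  # (1, 1)
```

In a clinic that schedules continuously through the day, both counts should be well above zero for any reasonable window. A count of zero just before the cutoff, alongside a busy window just after it, would be consistent with two separate clinic sessions rather than a threshold applied to a continuous schedule.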

More concerning, the distribution of infusion times in this single-center trial, conducted between 2022 and 2024, appears to differ from the infusion-time distributions previously documented at the very same hospital between 2018 and 2023. While workflows can evolve, a dramatic restructuring of infusion scheduling, especially one that produces such a clean midday gap, would be unusual and warrants explanation.

Additional forensic observations suggest that infusion timing for participants in the morning group is tightly clustered across cycles, often within roughly an hour of prior infusions, whereas the afternoon group displays much more variation, with some patients following predictable schedules and others spread broadly from 3 to 8 pm.

If corroborated, that asymmetry raises the possibility that the early and late groups reflect distinct operational sessions rather than random variation around a biologic threshold.

If early and late correspond to different clinic blocks, potentially involving different staff, workflows, patient flow, or scheduling constraints, then the exposure may in part reflect clinic operations rather than circadian immunobiology.

More worrisome, the wider variation in the afternoon group suggests the possibility of non-random assignment driven by patient availability, reflecting differences in work schedules, caregiving demands, transportation access, and other social and structural factors. If those factors differ systematically between morning and afternoon patients, then timing may simply be standing in for differences in adherence, social support, or other determinants of outcomes.

That distinction is not cosmetic. It fundamentally alters causal interpretation. If timing reflects operational differences or socioeconomic status rather than circadian biology, the observed survival difference may reflect confounding rather than chronotherapy.

The Responsible Interpretation

If this trial is valid, it is transformative. A no-cost scheduling change that doubles survival would reshape oncology practice overnight!

If it is not valid, it risks misleading clinicians, distorting research agendas (as critics argue happened with the amyloid hypothesis for Alzheimer’s dementia), and eroding trust.

The solution is verification. Release de-identified patient-level time-to-event data with exact infusion timestamps and censoring indicators. Publish the locked statistical analysis plan and version history. Provide the code used to generate the hazard ratios and survival curves. Clarify definitions of discontinuation and censoring.
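To make concrete what released data would allow, here is a minimal Python sketch of two checks an outside analyst could run on de-identified time-to-event records: a Kaplan-Meier survival estimate and a count of patients censored before 12 months. It is not the trial's analysis code. The function names, the data layout (one follow-up duration in months plus an event indicator per patient), and the example values are assumptions for illustration only.

```python
def kaplan_meier(durations, events):
    """Kaplan-Meier estimate as a list of (time, survival) pairs, one per
    distinct time at which at least one event was observed.

    durations: follow-up time for each patient
    events:    1 if the event (e.g. death) was observed, 0 if censored
    """
    if len(durations) != len(events):
        raise ValueError("durations and events must have the same length")
    data = sorted(zip(durations, events))
    n_at_risk = len(data)
    survival = 1.0
    curve = []
    i = 0
    while i < len(data):
        t = data[i][0]
        n_events = 0
        n_leaving = 0
        # Group everyone whose follow-up ends at time t. Patients censored
        # at t still count as at risk at t, the usual convention.
        while i < len(data) and data[i][0] == t:
            n_events += data[i][1]
            n_leaving += 1
            i += 1
        if n_events > 0:
            survival *= 1.0 - n_events / n_at_risk
            curve.append((t, survival))
        n_at_risk -= n_leaving
    return curve


def count_early_censoring(durations, events, horizon=12.0):
    """Number of patients censored (no event observed) before `horizon`."""
    return sum(1 for d, e in zip(durations, events) if e == 0 and d < horizon)


# Made-up follow-up times in months; NOT trial data.
months = [2, 3, 3, 5, 8]
observed = [1, 1, 0, 1, 0]
for t, s in kaplan_meier(months, observed):
    print(f"month {t}: estimated survival {s:.2f}")
print("censored before 12 months:", count_early_censoring(months, observed))
```

With patient-level data in hand, the same two functions could be run separately on the early and late infusion groups, which would show both whether the published curves can be reproduced and whether the first year of follow-up truly contains no censored patients.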

When the only knob you turn is the clock and survival doubles, extraordinary claims don’t earn applause, they earn scrutiny.

Clearly a lot of thought went into this post. A closer look certainly reveals some red flags. Did you contact the authors or consider a letter to the editor? Before calling for a retraction, I’d want to hear their response. I’m assuming the reviewers may have already posed some of these questions.